Hi, I'm Anuj Dutt.

I am a Machine Learning Engineer at Adobe, building cloud-scale GenAI systems for Acrobat. I focus on the "system around the LLM": prompt and workflow design, multi-agent orchestration, evaluation loops, and production hardening. I also mentor engineers and drive technical alignment across partner teams. At Adobe, I've shipped work across the PDF Spaces, Contract Understanding, and Creation initiatives.

Previously, I spent 3+ years at Jabra in AI Systems, taking edge models from research to production with hardware-in-the-loop optimization. This work achieved a 16% reduction in model memory and 20%+ performance gains across in-market products. For a next-gen product, I drove the SoC platform decision end-to-end: I translated real-time AI constraints into platform requirements, evaluated multiple vendors, benchmarked candidate SoCs, and delivered a clear recommendation that unblocked the product architecture. I also developed the core algorithm for, and shipped, the first Intelligent Meeting Spaces feature, which lets teams define a meeting boundary so that only people inside the specified area are included.

Earlier, in Bose’s Consumer Electronics Applied Research group, I built EdgeAI for the Bose AR ecosystem. I helped deliver Bose’s first on-device DNN on mobile as part of the BoseAR library, and trained an IMU-only gesture recognition model (10 gestures) for Bose Frames that leverages the glasses' 9-axis head-motion sensor stack. I also designed privacy-focused ML pipelines and partnered with hardware platforming teams to deploy ML on battery-powered edge devices and mobile. In parallel, I built rapid prototypes to validate new on-device interaction concepts ahead of productization.

I'm currently pursuing the LEAD program at the Stanford Graduate School of Business (GSB).

Recent Activity

Understanding LLM Context Length: What It Really Means and Why It Matters?

A deep dive into why context length exists in LLMs, what breaks when you exceed it, and the architectural constraints that make training long-context models expensive.

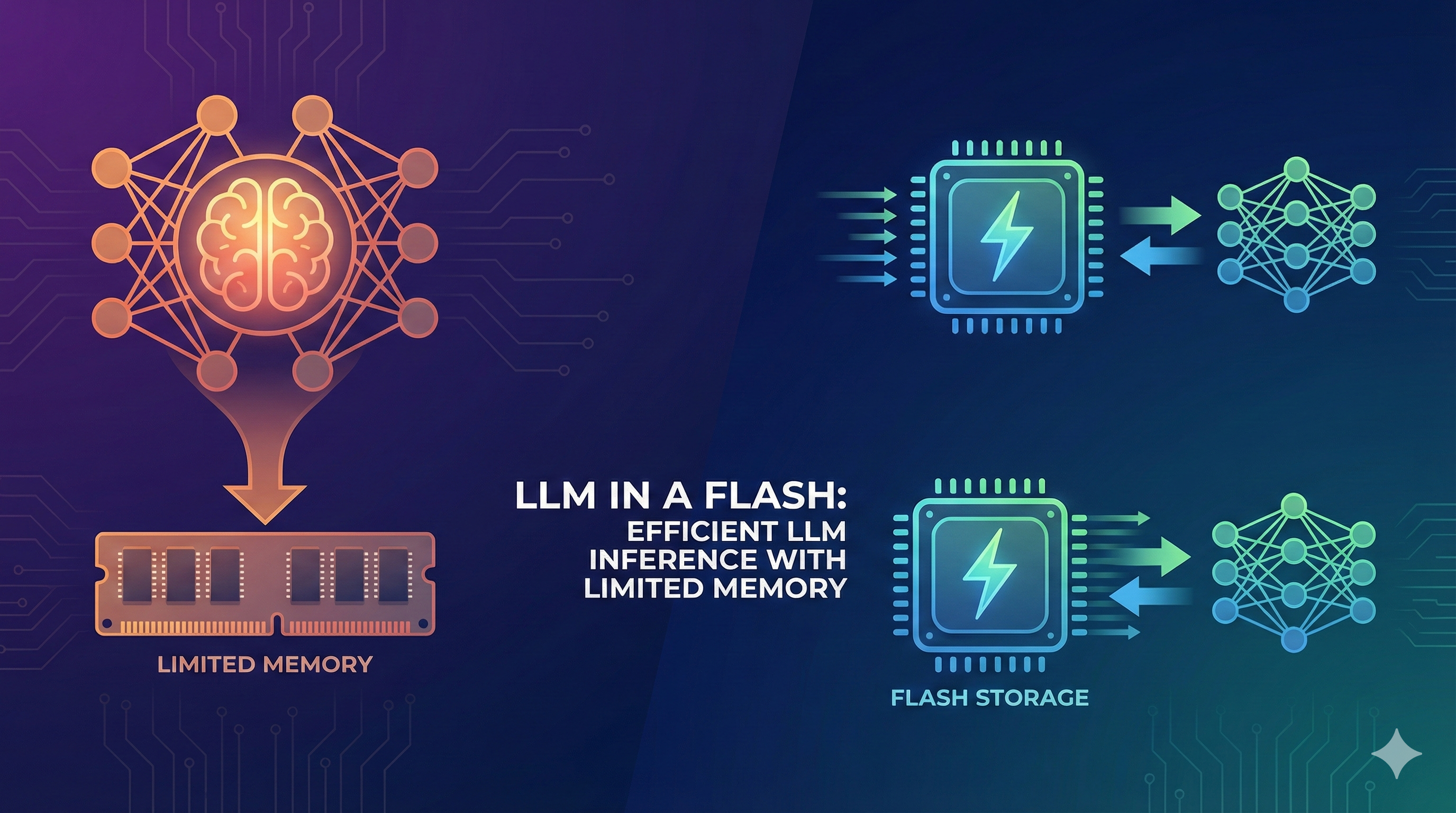

LLM in a Flash: Efficient LLM Inference with Limited Memory

Exploring techniques for running large language models efficiently on memory-constrained devices, optimizing inference without sacrificing performance.

Emerging Properties in Self-Supervised Vision Transformers: DINO Paper Summary

A comprehensive summary of the DINO paper, covering the properties that emerge in self-supervised vision transformers and their applications.

Technical Newsletter

Occasional deep dives on AI architecture, medical imaging, and Staff-level engineering. No spam, just technical value.

Join 2,400+ technical readers